Theoretical foundation of unbiased estimation of health care productivity

To test our central hypothesis, that autonomous AI improves healthcare system productivity, in an unbiased manner, we developed a healthcare productivity model based on rational queueing theory30, as widely used in the healthcare operations management literature31. A healthcare provider system, which can be a hospital, an individual physician providing a service, an autonomous AI providing a service at a performance level at least as high as that of a human expert, a combination thereof, or a national healthcare system, is modeled as an “overloaded queue” facing a potential demand that is greater than its capacity; that is, Λ ≫ μ, where Λ denotes the total demand on the system (patients seeking care) and μ denotes the maximum number of patients the system can serve per unit of time. We define system productivity as

$$\lambda =\frac{n_q}{t},$$

(1)

where nq is the number of patients who completed a care encounter with a quality of care that was non-inferior to q, and t is the length of time over which nq was measured, allowing for systems that include autonomous AI in some fashion. While the standard definitions of healthcare labor productivity, such as in Camasso et al.7, ignore quality of care, q assumes quality of care non-inferior to the case when care is provided by a human expert, such as a retina specialist, to address potential concerns about the safety of healthcare AI8: our definition of λ, as represented by Eq. (1), guarantees that quality of care is either maintained or improved.

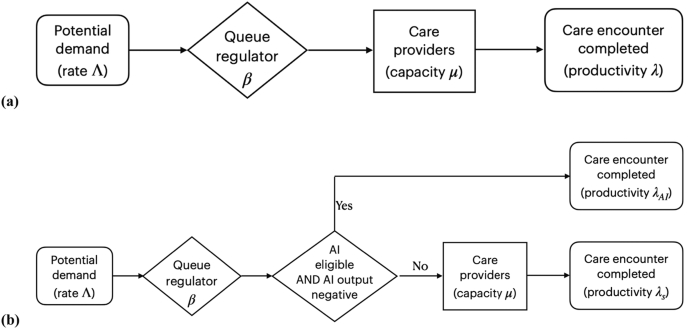

β denotes the proportion of patients who receive and complete the care encounter in a steady state, in which the average number of patients who successfully complete the care encounter equals the average number of patients who gain access to care, per unit of time; in other words, λ = β · Λ. See Fig. 3. Recall that in the overloaded queue model, there are many patients, (1 − β) · Λ, who do not gain access. β is agnostic about the specific way in which access is determined: β may take the form of a hospital administrator who determines a maximum number of patients admitted to the system, or the form of barriers to care, such as an inability to pay, travel long distances, or take time off work, or other sources of health inequities, that limit a patient's access to the system. As mentioned, λ is agnostic as to whether the care encounter is performed and completed by an autonomous AI, human providers, or a combination thereof, because from the patient perspective we measure the number of patients who complete the appropriate level of care per unit of time at a performance level at least as high as that of a human physician.

Not every patient will be eligible to start their encounter with autonomous AI, for example because they do not fit the inclusion criteria for the autonomous AI, and we denote by α, 0 < α < 1, the proportion of eligible patients. Nor will every patient be able to complete their care encounter with autonomous AI, for example when the autonomous AI diagnoses them with disease requiring a human specialist, and we denote by γ, 0 < γ < 1, the proportion of patients who started their care encounter with AI and still required a human provider to complete it. The proportion α(1 − γ) are diagnosed as “disease absent” and start and complete their encounter with autonomous AI only, without needing to see a human provider. For all permutations, productivity λ is measured as the number of patients who complete a provided care encounter per unit of time: λC is the productivity of the control group, in which the screening result of the AI system is not used to determine the rest of the care process, and λAI is the productivity of the intervention group, in which the screening result of the AI system is used to determine the rest of the care process and the AI performance is at least as high as that of the human provider.
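The bookkeeping implied by λ = β · Λ, α and γ can be illustrated with a minimal sketch. The function name `patient_flows`, the dictionary keys and the example values in the test are illustrative assumptions, not quantities from the trial; the sketch also assumes that α and γ apply to the patients who have gained access to the system.

```python
def patient_flows(total_demand, beta, alpha, gamma):
    """Split demand per unit of time into the flows of the overloaded queue model.

    total_demand: Lambda, patients seeking care per unit of time
    beta:  proportion of demand that gains access and completes care
    alpha: proportion of admitted patients eligible to start with autonomous AI
    gamma: proportion of AI-started patients who still need a human provider
    """
    if not (0 < beta <= 1 and 0 < alpha < 1 and 0 < gamma < 1):
        raise ValueError("beta must lie in (0, 1]; alpha and gamma in (0, 1)")

    productivity = beta * total_demand            # lambda = beta * Lambda
    no_access = (1 - beta) * total_demand         # patients who do not gain access
    ai_only = alpha * (1 - gamma) * productivity  # complete care with AI alone
    needs_human = productivity - ai_only          # ineligible, or referred on by the AI

    return {
        "productivity": productivity,
        "no_access": no_access,
        "ai_only": ai_only,
        "needs_human": needs_human,
    }
```

In this sketch the share of admitted patients who still see a human provider is 1 − α(1 − γ), which is the sum of the ineligible share (1 − α) and the referred share αγ.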

a Mathematical model of the ‘overloaded queue’ healthcare system, used to estimate productivity as λ = β · Λ without observer bias. b Model of the ‘overloaded queue’ healthcare system in which autonomous AI is added to the workflow.

Because an autonomous AI that completes the care process for patients without disease (typically less complex patients), as in the present study, will result in relatively more complex patients being seen by the human specialist, we calculate complexity-adjusted specialist productivity as

$$\lambda_{ca}=\frac{\bar{c}\,n_q}{t},$$

(2)

with \(\bar{c}\) the average complexity, as determined with an appropriate method, for all n patients that complete the care encounter with that specialist. This definition of λca, as represented by Eq. (2), corrects for a potential underestimate of productivity that arises because, once the AI changes the patient mix, the human specialist sees more clinically complex patients who require more time.
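As a minimal sketch of Eqs. (1) and (2), the two productivity measures can be computed from a list of per-patient complexity scores and the observation time. The function names and the example scores in the test are hypothetical, and the sketch assumes that every listed patient completed a care encounter of adequate quality.

```python
from statistics import mean


def productivity(n_completed, hours):
    """Eq. (1): completed care encounters per unit of time."""
    if hours <= 0:
        raise ValueError("hours must be positive")
    return n_completed / hours


def complexity_adjusted_productivity(complexity_scores, hours):
    """Eq. (2): mean complexity times completed encounters per unit of time."""
    if not complexity_scores:
        raise ValueError("at least one completed encounter is required")
    n_completed = len(complexity_scores)
    return mean(complexity_scores) * productivity(n_completed, hours)
```

Because the mean complexity multiplied by the number of encounters is simply the summed complexity, Eq. (2) can also be read as complexity points handled per unit of time.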

We focus on the implication Λ ≫ μ, in other words, that system capacity is limited relative to potential demand, because that is the only setting in which λC and λAI can be measured without recruitment bias, i.e., a context where patients arrive throughout the day without appointment or other filter, as is the case in Emergency Departments in the US and in almost all clinics in low- and middle-income countries (LMICs). This is not the case, however, in contexts where most patient visits are scheduled: there, β cannot be changed dynamically, and measuring λ would lead to bias. Thus, to avoid recruitment bias, we selected as the trial setting a clinic with a very large demand (Λ), Deep Eye Care Foundation (DECF) in Bangladesh.

Trial design

The B-PRODUCTIVE (Bangladesh-PRODUCTIVity in Eyecare) study was a preregistered, prospective, double-masked, cluster-randomized clinical trial performed in retina specialist clinics at DECF, a not-for-profit, non-governmental hospital in Rangpur, Bangladesh, between March 20 and July 31, 2022. The clusters were specialist clinic days, and all clinic days were eligible during the study period. Patients are not scheduled: there are no pre-scheduled patient visit times or time slots. Instead, access to a specialist clinic visit is determined by clinic staff on the basis of observed congestion, as explained in the previous section.

The study protocol was approved by the ethics committees at the Asian Institute of Disability and Development (Dhaka, Bangladesh # Southasia-hrec-2021-4-02), the Bangladesh Medical Research Council (Dhaka, Bangladesh # 475 27 02 2022) and Queen’s University Belfast (Belfast, UK # MHLS 21_46). The tenets of the Declaration of Helsinki were adhered to throughout, and the trial was preregistered with ClinicalTrials.gov, #NCT05182580, before the first participant was enrolled. The present study included local researchers throughout the research process, including design, local ethics review, implementation, data ownership and authorship to ensure it was collaborative and locally relevant.

Autonomous AI system

The autonomous AI system (LumineticsCore (formerly IDx-DR), Digital Diagnostics, Coralville, Iowa, USA) was designed, developed, previously validated and implemented under an ethical framework to ensure compliance with the principles of patient benefit, justice and autonomy, and avoid “Ethics Dumping”13. It diagnoses specific levels of diabetic retinopathy and diabetic macular edema (Early Treatment Diabetic Retinopathy Study (ETDRS) level 35 and higher), clinically significant macular edema, and/or center-involved macular edema32, referred to as “referable Diabetic Eye Disease” (DED)33, that require management or treatment by an ophthalmologist or retina specialist for care to be appropriate. If the ETDRS level is 20 or lower and no macular edema is present, appropriate care is to retest in 12 months34. The AI system is autonomous in that the medical diagnosis is made solely by the system without human oversight. Its safety, efficacy, and lack of racial, ethnic and sex bias were validated in a pivotal trial in a representative sample of adults with diabetes at risk for DED, using a workflow and minimally trained operators comparable to the current study13. This led to US FDA De Novo authorization (“FDA approval”) in 2018 and national reimbursement in 202113,15.

Autonomous AI implementation and workflow

The autonomous AI system was installed by DECF hospital information technology staff on March 2, 2022, with remote assistance from the manufacturer. Autonomous AI operators completed a self-paced online training module on basic fundus image-capture and camera operations (Topcon NW400, Tokyo, Japan), followed by remote hands-on training on the operation by representatives of the manufacturers. Deployment was performed locally, without the physical presence of the manufacturers, and all training and support were provided remotely.

Typically, pharmacologic pupillary dilation is provided only as needed during use of the autonomous AI system. For the current study, all patient participants received pharmacologic dilation with a single drop each of tropicamide 0.8% and phenylephrine 5%, repeated after 15 min if a pupil size of ≥4 mm was not achieved. This was done to facilitate indirect ophthalmoscopy by the specialist participants as required. The autonomous AI system guided the operator to acquire two color fundus images determined to be of adequate quality using an image quality assessment algorithm, one each centered on the fovea and the optic nerve, and directed the operator to retake any images of insufficient quality. This process took approximately 10 min, after which the autonomous AI system reported one of the following within 60 s: “DED present, refer to specialist”, “DED not present, test again in 12 months”, or “insufficient image quality”. The latter response occurred when the operator was unable to obtain images of adequate quality after three attempts.

Participants

This study included both physician participants and patient participants. Physician participants were retina specialists who gave written informed consent prior to enrollment. For specialist participants, the inclusion criteria were:

- Completed vitreoretinal fellowship training
- Examined ≥20 patients per week with diabetes and no known DED over the prior three months
- Performed laser retinal treatments or intravitreal injections on at least three DED patients per month over the same time period.

Exclusion criteria were:

‘AI-eligible patients’ are clinic patients meeting the following criteria:

- Presenting to DECF for eye care
- Age ≥ 22 years. While preregistration stated participants could be aged ≥18 years, the US FDA De Novo clearance for the autonomous AI limits eligibility to ≥22 years
- Diagnosis of type 1 or type 2 diabetes prior to or on the day of recruitment
- Best corrected visual acuity ≥ 6/18 in the better-seeing eye
- No prior diagnosis of DED
- No history of any laser or incisional surgery of the retina or injections into either eye
- No medical contraindication to fundus imaging with dilation of the pupil12.

Exclusion criteria were:

- Inability to provide informed consent or understand the study
- Persistent vision loss, blurred vision or floaters
- Previously diagnosed with diabetic retinopathy or diabetic macular edema
- History of laser treatment of the retina or injections into either eye or any history of retinal surgery
- Contraindicated for imaging by fundus imaging systems.

Patient participants were AI-eligible patients who gave written informed consent prior to enrollment. All eligibility criteria remained unchanged over the duration of the trial.

Randomization, masking and concealment

B-PRODUCTIVE was a concealed cluster-randomized trial in which a block randomization scheme by clinic date was generated by the study statistician (JP) on a monthly basis, taking into account holidays and scheduled clinic closures. The random allocation of each cluster (clinic day) was concealed until clinic staff received an email with this information just before the start of that day’s clinic, and they had no contact with the specialists during trial operations. Medical staff who determined access, specialists and patient participants remained masked to the random assignment of clinic days as control or intervention.
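Block randomization by clinic date, as described above, can be sketched as follows. The block size, the 1:1 allocation ratio, the seed handling and the function name `block_randomize` are all assumptions made for illustration; the text does not state the block size or the software used by the study statistician.

```python
import random


def block_randomize(clinic_days, block_size=4, seed=None):
    """Assign clinic days to 'control' or 'intervention' in permuted blocks.

    clinic_days: ordered list of open clinic dates (holidays and scheduled
                 closures already removed)
    block_size:  hypothetical even block size, half of each block per arm
    """
    if block_size <= 0 or block_size % 2 != 0:
        raise ValueError("block_size must be a positive even number")

    rng = random.Random(seed)
    allocation = {}
    for start in range(0, len(clinic_days), block_size):
        # Each block holds equal numbers of both arms in random order.
        block = ["control", "intervention"] * (block_size // 2)
        rng.shuffle(block)
        # zip truncates the final block if fewer days remain than block_size.
        for day, arm in zip(clinic_days[start:start + block_size], block):
            allocation[day] = arm
    return allocation
```

Balancing within blocks keeps the two arms close to equal in size over any stretch of the calendar, which matters when the schedule is generated month by month.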

Intervention

After giving informed consent, patient participants provided demographic, income, educational and clinical data to study staff using an orally administered survey in Bangla, the local language. Patients who were eligible but did not consent underwent the same clinical process without completing an autonomous AI diagnosis or survey. All patient participants, both intervention and control, completed the autonomous AI diagnostic process as described in the Autonomous AI implementation and workflow section above: the difference between intervention and control groups was that in the intervention group, the diagnostic AI output determined what happened to the patient next. In the control group, patient participants always went on to complete a specialist clinic visit after autonomous AI, irrespective of its output. In the intervention group, patient participants with an autonomous AI diagnostic report of “DED absent, return in 12 months” completed their care encounters without seeing a specialist and were recommended to make an appointment for a general eye exam in three months as a precautionary measure for the trial, minimizing the potential for disease progression (standard recall would be 12 months).

In the intervention group, patient participants with a diagnostic report of “DED present” or “image quality insufficient” completed their care encounters by seeing the specialist for further management. “Seeing the specialist” for not-consented, control group, and “DED present / insufficient” patient participants involved tonometry, anterior and posterior segment biomicroscopy, indirect ophthalmoscopy, and any further examinations and ancillary testing deemed appropriate by the specialist. After the patient participant completed the autonomous AI process, a survey with a 4-point Likert scale (“very satisfied,” “satisfied,” “dissatisfied,” “very dissatisfied”) was administered concerning the participant’s satisfaction with interactions with the healthcare team, time to receive examination results, and receiving their diagnosis from the autonomous AI system.
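The routing rule that distinguishes the two arms can be summarized in a short sketch. The function name `next_step`, the shortened output labels and the returned strings are illustrative shorthand, not the exact wording of the AI system's reports.

```python
ARMS = ("control", "intervention")
AI_OUTPUTS = ("DED present", "DED absent", "insufficient image quality")


def next_step(arm, ai_output):
    """Return what happens to a patient participant after the autonomous AI exam."""
    if arm not in ARMS:
        raise ValueError("unknown arm: %r" % (arm,))
    if ai_output not in AI_OUTPUTS:
        raise ValueError("unknown AI output: %r" % (ai_output,))

    # Only on intervention days does the AI output change the care pathway.
    if arm == "intervention" and ai_output == "DED absent":
        return "encounter complete; general eye exam recommended in 3 months"
    # Control days, and any non-negative output on intervention days.
    return "specialist clinic visit"
```

Every other combination, including all control-day patients regardless of AI output, ends with a specialist visit.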

Study outcomes

The primary outcome was clinic productivity for diabetes patients (λd), measured as the number of completed care encounters per hour per specialist for control / non-AI (λd,C) and intervention / AI (λd,AI) days. λd,C used the number of completed specialist encounters; λd,AI used the number of eligible patients in the intervention group who completed an autonomous AI care encounter with a diagnostic output of “DED absent”, plus the number of encounters that involved the specialist exam. For the purposes of calculating the primary outcome, all diabetes patients who presented to the specialty clinic on study days were counted, including those who were not patient participants or did not receive the autonomous AI examination.
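A minimal sketch of the two primary-outcome calculations follows. It assumes that the denominator is the specialist's recorded clinic hours for the day; the function names and the example counts in the test are hypothetical.

```python
def lambda_d_control(specialist_encounters, specialist_hours):
    """Control days: completed specialist encounters per specialist hour."""
    if specialist_hours <= 0:
        raise ValueError("specialist_hours must be positive")
    return specialist_encounters / specialist_hours


def lambda_d_ai(ai_only_encounters, specialist_encounters, specialist_hours):
    """Intervention days: AI-only 'DED absent' completions plus specialist
    encounters, per specialist hour."""
    if specialist_hours <= 0:
        raise ValueError("specialist_hours must be positive")
    return (ai_only_encounters + specialist_encounters) / specialist_hours
```

The only difference between the two is the extra numerator term for encounters completed by the autonomous AI alone, which is how AI-completed care is credited without adding specialist time.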

One of the secondary outcomes from this study was λ for all patients, measured as the number of completed care encounters per hour per specialist by counting all patients presenting to the DECF specialty clinic on study days, including those without diabetes, for control (λC) and intervention days (λAI). Complexity-adjusted specialist productivity λca was calculated for intervention and control arms by multiplying λd,C and λd,AI by the average patient complexity \(\bar{c}\).

During each clinic day, the study personnel recorded the day of the week and the number of hours that each specialist participant spent in the clinic, starting with the first consultation in the morning and ending when the examination of the last patient of the day was completed, including any time spent ordering and reviewing diagnostic tests and scheduling future treatments. Any work breaks, time spent on performing procedures, and other duties performed outside of the clinic were excluded. Study personnel obtained the number of completed clinic visits from the DECF patient information system after each clinic day.

At baseline, specialist participants provided information on demographic characteristics, years in specialty practice and patient volume. They also completed a questionnaire at the end of the study, indicating their agreement (5-point Likert scale, “strongly agree” to “strongly disagree”) with the following statements regarding autonomous AI: (1) saves time in clinics, (2) allows time to be focused on patients requiring specialist care, (3) increases the number of procedures and surgeries, and (4) improves DED screening.

Other secondary outcomes were (1) patient satisfaction, (2) number of DED treatments scheduled per day, and (3) complexity of patient participants. Patient and provider willingness to pay for AI was a preregistered outcome, but upon further review by the Bangladesh Medical Research Council, these data were removed based on their recommendation. The complexity score for each patient was calculated by a masked United Kingdom National Health Service grader using the International Grading system (a level 4 reference standard24), adapted from Wilkinson et al. International Clinical Diabetic Retinopathy and Diabetic Macular Edema Severity Scales31 (no DED = 0 points, mild non-proliferative DED = 0 points, moderate or severe non-proliferative DED = 1 point, proliferative DED = 3 points, and diabetic macular edema = 2 points). The complexity score was summed across both eyes.
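The point scheme above can be sketched as a lookup table summed across both eyes. The text does not specify how co-occurring findings within one eye (for example, proliferative DED together with macular edema) are combined; this sketch assumes that their points add, and the key names and function names are hypothetical.

```python
# Points per finding, taken from the scheme described in the text.
POINTS = {
    "none": 0,
    "mild_nonproliferative": 0,
    "moderate_or_severe_nonproliferative": 1,
    "proliferative": 3,
    "macular_edema": 2,
}


def eye_score(findings):
    """Sum the points for one eye (assumes co-occurring findings are additive)."""
    return sum(POINTS[finding] for finding in findings)


def patient_complexity(right_eye_findings, left_eye_findings):
    """Complexity score for a patient: the per-eye scores summed across both eyes."""
    return eye_score(right_eye_findings) + eye_score(left_eye_findings)
```

An unrecognized finding raises a `KeyError` rather than being silently scored as zero.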

Power calculation

The null hypothesis was that the primary outcome parameter λd would not differ significantly between the study groups. The intra-cluster correlation coefficient (ICC) between patients within a particular cluster (clinic day) was estimated at 0.15, based on pilot data from the clinic. At 80% power, a two-sided alpha of 5%, a cluster size of eight patients per clinic day, and a control group estimated mean of 1.34 specialist clinic visits per hour (based on clinic data from January to March 2021), a sample size of 924 patients with completed clinically-appropriate retina care encounters (462 in each of the two study groups) was sufficient to detect a between-group difference of 0.34 completed care encounters per hour per specialist with autonomous AI (equivalent to a 25% increase in productivity λd,AI).
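The text does not state how clustering entered the sample size calculation. One standard approach, shown here only as an illustrative sketch and not as the authors' method, inflates the sample size by the design effect 1 + (m − 1) · ICC, where m is the cluster size. The function names are hypothetical.

```python
def design_effect(cluster_size, icc):
    """Standard variance inflation factor for equal-sized clusters."""
    return 1 + (cluster_size - 1) * icc


def effective_sample_size(n_total, cluster_size, icc):
    """Number of independent observations equivalent to n_total clustered ones."""
    return n_total / design_effect(cluster_size, icc)


def relative_increase(control_mean, difference):
    """Between-group difference expressed as a fraction of the control mean."""
    return difference / control_mean
```

With the reported inputs (m = 8, ICC = 0.15), the design effect is about 2.05, so 924 clustered patients carry roughly the information of 450 independent ones, and a difference of 0.34 on a control mean of 1.34 is an increase of roughly 25%.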

Statistical methods

Study data were entered into Microsoft Excel 365 (Redmond, WA, USA) by the operators and the research coordinator in DECF. Data entry errors were corrected by the Orbis program manager in the US (NW), who remained masked to study group assignment.

Frequencies and percentages were used to describe patient participant characteristics for the two study groups. Age as a continuous variable was summarized with the mean and standard deviation. The number of treatments and complexity score were compared with the Wilcoxon rank sum test since they were not normally distributed. The primary outcome was normally distributed and compared between study groups using a two-sided Student’s t-test, and 95% confidence intervals around these estimates were calculated.
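For orientation, the test statistic of a two-sample Student's t-test with pooled variance can be sketched using only the Python standard library. This is an illustration of the textbook formula, not the SAS procedure used in the study; the function name is hypothetical, and the p-value and confidence interval are omitted because they require the t distribution, which the standard library does not provide.

```python
from math import sqrt
from statistics import mean, variance


def pooled_t_statistic(group_a, group_b):
    """Return (t, degrees_of_freedom) for a two-sample pooled-variance t-test."""
    n_a, n_b = len(group_a), len(group_b)
    if n_a < 2 or n_b < 2:
        raise ValueError("each group needs at least two observations")

    degrees_of_freedom = n_a + n_b - 2
    # Pooled variance: sample variances weighted by their degrees of freedom.
    pooled_var = (
        (n_a - 1) * variance(group_a) + (n_b - 1) * variance(group_b)
    ) / degrees_of_freedom
    standard_error = sqrt(pooled_var * (1 / n_a + 1 / n_b))
    t = (mean(group_a) - mean(group_b)) / standard_error
    return t, degrees_of_freedom
```

In this setting each observation would be the productivity of one clinic day, with the two groups being control and intervention days.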

The robustness of the primary outcome was tested by utilizing linear regression modeling with generalized estimating equations that included clustering effects of clinic days. The adjustment for clustering of days since the beginning of the trial utilized an autoregressive first-order covariance structure since days closer together were expected to be more highly correlated. Residuals were assessed to confirm that a linear model fit the rate outcome. Associations between the outcome and potential confounders of patient age, sex, education, income, complexity score, clinic day of the week, and autonomous AI output were assessed. A sensitivity analysis with multivariable modeling included patient age and sex, and variables with p-values < 0.10 in the univariate analysis. All statistical analyses were performed using SAS version 9.4 (Cary, North Carolina).